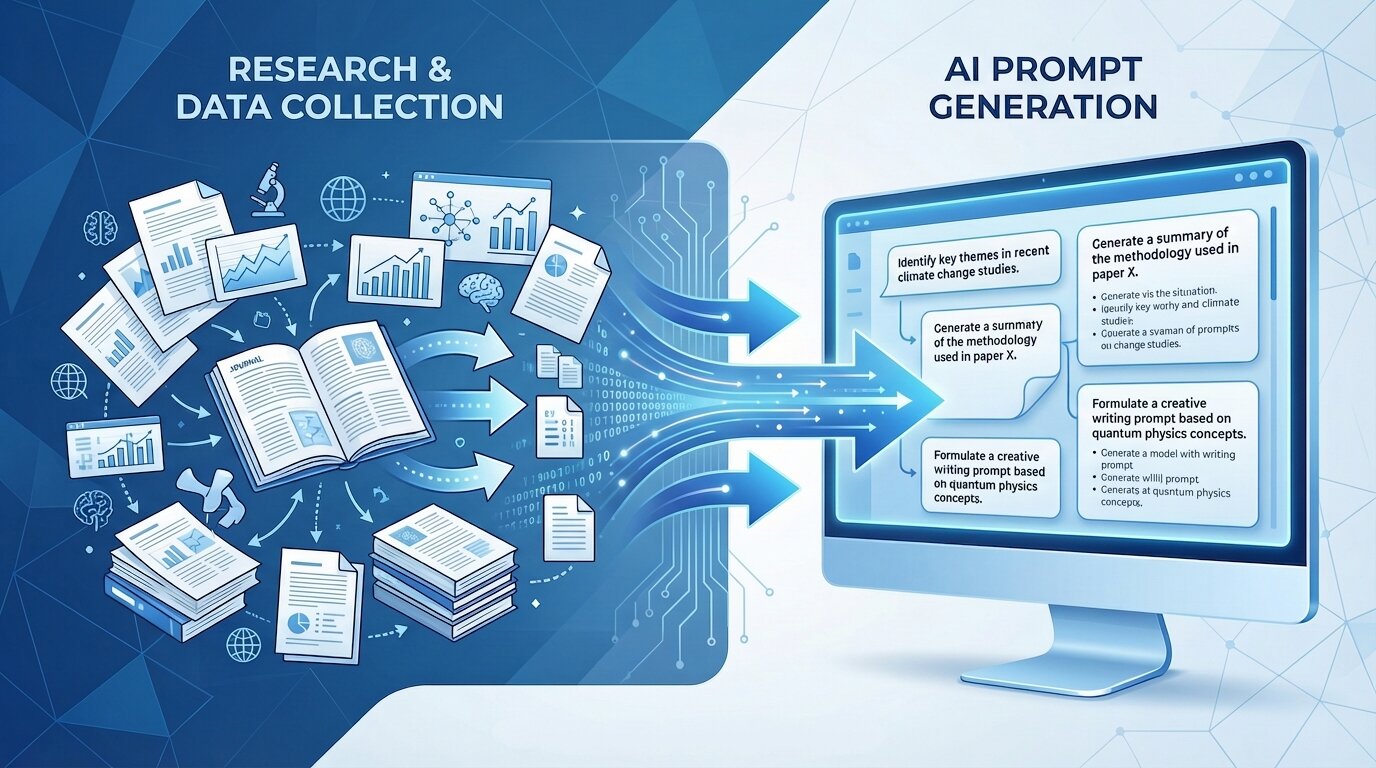

Your research sits there in a messy Google Doc or scattered notes app, full of gold. But when you feed it to AI, you get either a wall of generic fluff or something that misses the point entirely. The difference between those two outcomes is rarely the model itself. It is almost always the prompt.

Most writers and marketers I talk to hit the same wall. They either dump every research note into the chat hoping the AI will sort it out, or they keep the prompt so short and vague that the output feels like it came from a different planet. Both approaches waste time and force you to rewrite from scratch. This post changes that. It shows you exactly how to turn raw research into a prompt that delivers a structured, on-brand first draft you can actually edit instead of scrap.

Think of it this way: a weak prompt sounds like “Write a blog post about AI for marketers using my notes.” The result is predictable and flat. A strong prompt reads more like a detailed brief that you would hand a trusted freelancer: clear role, audience, goal, tone, and distilled research points. The output feels like it already understands your voice and your reader. By the end of this piece you will have a reusable system that gets you usable drafts in one or two shots instead of five frustrating rounds.

The Five Elements Every Strong Writing Prompt Needs

Every prompt that reliably produces a workable draft shares the same five building blocks. Borrow this simple template and tweak it for every piece:

You are [role] writing for [audience]. Your goal is [specific outcome]. Use a [tone description] voice. Here is the exact research to draw from:

Then list your distilled points (more on that in the next section). End with output instructions: “Structure the draft with clear H2 headings, keep each section 150-250 words, and leave [placeholders] for any stats or links I will add later.”

Let’s break the five elements down so you see why each one matters.

- Role: Tell the AI exactly who it is. “Act as a senior content strategist who has written 200+ SEO-focused blog posts for B2B SaaS companies.” This sets the expertise level and decision-making framework.

- Audience: Be granular. Not “marketers.” Try “mid-level marketing managers at SaaS companies who already run weekly campaigns but feel overwhelmed by new AI tools and want practical, no-fluff tactics they can test this month.”

- Goal: Make it measurable. “Help the reader feel confident enough to test one new prompting technique in their next content brief and see a measurable lift in draft quality.”

- Tone: Give more than one adjective. “Conversational but authoritative, direct, zero corporate jargon, occasional light humor that feels human rather than forced.” We will dig deeper into voice techniques below.

- Input: Your research, but only after you clean it up (next section).

Copy the template above into your own notes app. It becomes your starting point every single time.
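If you would rather keep the template in a script than in a notes app, here is a minimal sketch of the same idea in Python. The function name `build_prompt` and its parameter names are my own illustration, not part of any tool, and the sample values are shortened versions of the examples above.

```python
def build_prompt(role, audience, goal, tone, research_points, output_instructions):
    """Fill the five-element template, then append the output instructions."""
    # Number the distilled research points so follow-up prompts can refer to them.
    numbered = "\n".join(
        f"{i}. {point}" for i, point in enumerate(research_points, start=1)
    )
    return (
        f"You are {role} writing for {audience}. "
        f"Your goal is {goal}. "
        f"Use a {tone} voice. "
        "Here is the exact research to draw from:\n\n"
        f"{numbered}\n\n"
        f"{output_instructions}"
    )


prompt = build_prompt(
    role="a senior content strategist",
    audience="mid-level marketing managers at SaaS companies",
    goal="to help the reader test one new prompting technique this month",
    tone="conversational but authoritative",
    research_points=[
        "Most writers either over-prompt or under-prompt.",
        "Target readers tune out jargon and want actionable steps.",
    ],
    output_instructions="Structure the draft with clear H2 headings.",
)
print(prompt)
```

Keeping the five elements as separate arguments makes it obvious when one is missing, which is usually the reason a prompt underperforms.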

How to Distill Research Before You Paste a Single Word

This is the step most people skip, and it is where quality dies. Raw research notes are usually a brain dump: quotes, links, half-formed ideas, and repetition. Pasting that straight in forces the AI to do your sorting work while juggling token limits. The result is shallow coverage or weird emphasis on minor points.

Instead, spend five minutes turning those notes into clean, numbered bullets. Group related ideas. Cut anything tangential. Keep each bullet to one clear insight or fact.

Here is a quick before-and-after using a fictional research topic many marketers face: “How prompt engineering improves content ROI.”

Messy notes:

- Study showed 40% faster drafting with good prompts (link)

- People over-prompt or under-prompt

- Claude better at long form than GPT sometimes

- My last campaign with better prompts had 2x engagement

- Audience hates jargon

Cleaned input bullets for the prompt:

- A recent study (link) found that drafting was 40% faster with structured prompts than with free-form ones.

- Most writers either paste entire research docs (over-prompting) or give vague instructions (under-prompting), both leading to generic output.

- Key insight from testing: Claude handles structured long-form better than GPT-4o for marketing blogs in our case.

- My last campaign, which used refined prompts, produced 2x the engagement of earlier ones.

- Target readers are busy marketing managers who tune out jargon and want immediately actionable steps.

Now feed those five bullets into your template. The AI knows exactly what to emphasize, what to skip, and how the pieces connect. Quality jumps because the model is no longer guessing priorities.
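Deciding what matters in your notes is a judgment call that only you can make, but the mechanical part of the cleanup can be scripted. The sketch below assumes your notes are already a list of strings; the helper name `tidy_bullets` and the 30-word limit are illustrative choices, not a rule from any tool.

```python
def tidy_bullets(raw_notes, max_words=30):
    """Strip bullet markers, drop blanks and duplicates, flag overlong bullets."""
    seen = set()
    kept, too_long = [], []
    for note in raw_notes:
        # Collapse whitespace, then remove a leading "-" or "•" marker.
        # Note: this would also strip a leading minus sign from a number.
        text = " ".join(note.split()).lstrip("-• ").strip()
        # Compare case-insensitively and ignore a trailing period.
        key = text.lower().rstrip(".")
        if not text or key in seen:
            continue
        seen.add(key)
        kept.append(text)
        # A long bullet usually hides more than one idea; flag it for splitting.
        if len(text.split()) > max_words:
            too_long.append(text)
    return kept, too_long


kept, too_long = tidy_bullets([
    "- Audience hates jargon",
    "  audience hates jargon.  ",
    "",
    "- People over-prompt or under-prompt",
])
print(kept)
print(too_long)
```

Anything that lands in `too_long` is a candidate for splitting into two bullets or cutting back to a single insight.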

How to Inject Your Brand Voice So the Draft Sounds Like You

Generic AI tone is the fastest way to make your content blend into the noise. The fix is not “write like me.” That rarely works because the model does not have enough of your writing to pattern-match accurately.

Three practical techniques consistently outperform vague instructions:

First, adjective stacking plus negatives. “Write in a conversational, direct, no-jargon tone that feels like a helpful colleague explaining something over coffee. Never sound like a textbook or corporate memo. Avoid phrases like ‘leverage synergies’ or ‘unlock value.’”

Second, few-shot examples. Paste one or two short samples of your own writing (a previous blog intro works perfectly) and say: “Match the style, sentence rhythm, and level of directness in the examples below.”

Third, audience mirroring. “Write the way my readers actually speak in their Slack channels and team meetings: practical, slightly skeptical, quick to call out hype.” This forces the AI to mirror real language patterns instead of default polished marketing speak.

If you want to go deeper, feed your best-performing blog posts into a separate prompt first and ask it to extract your voice guidelines (principles, vocabulary, cadence, what to avoid). Then reuse those guidelines in every future prompt.
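Once you have voice guidelines, it helps to store them in one place so that every prompt reuses the same wording. Here is a rough sketch that combines the three techniques into a single block of text; `voice_block` and its arguments are hypothetical names chosen for this example.

```python
def voice_block(adjectives, avoid_styles, banned_phrases, samples=(), audience_speech=""):
    """Combine adjective stacking, negatives, audience mirroring, and samples."""
    parts = [f"Write in a {', '.join(adjectives)} tone."]
    if avoid_styles:
        parts.append(f"Never sound like {' or '.join(avoid_styles)}.")
    if banned_phrases:
        quoted = ", ".join(f'"{phrase}"' for phrase in banned_phrases)
        parts.append(f"Avoid phrases like {quoted}.")
    if audience_speech:
        parts.append(f"Write the way my readers actually speak: {audience_speech}.")
    if samples:
        parts.append(
            "Match the style, sentence rhythm, and level of directness "
            "in the examples below."
        )
        # Label each writing sample so the model can tell them apart.
        parts.extend(f"Example {i}:\n{s}" for i, s in enumerate(samples, start=1))
    return "\n\n".join(parts)


block = voice_block(
    adjectives=["conversational", "direct", "no-jargon"],
    avoid_styles=["a textbook", "a corporate memo"],
    banned_phrases=["leverage synergies", "unlock value"],
    samples=["Paste a short intro from one of your own posts here."],
    audience_speech="practical, slightly skeptical, quick to call out hype",
)
print(block)
```

The returned text can be pasted in place of the one-line tone description in your template.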

Why the First Draft Is Never the Final One

Accept this truth: the first output is raw material, not the finished piece. That is normal and actually useful. Treat the AI like a writing partner in the room with you.

Effective follow-up prompts are short and surgical:

- “Make the introduction punchier and more direct. Cut the first two sentences and start with the strongest research insight.”

- “Expand section three with one practical example my reader can test this week.”

- “Rewrite paragraphs 4-6 to sound more conversational while keeping all the facts.”

- “Check the entire draft against the original research bullets and flag anything missing or off-topic.”

Each iteration takes seconds and compounds quality. After two or three targeted exchanges you usually have a draft that passes the checklist in the next section.
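If you run these follow-ups through a script instead of a chat window, the loop could look roughly like this. The `send` argument is a placeholder for whatever function calls your model; it is an assumption of this sketch, not a real API, and the `fake_send` stand-in only exists so the example runs without one.

```python
def refine(draft, follow_ups, send):
    """Apply follow-up prompts one at a time, feeding each new draft back in."""
    history = [draft]
    for instruction in follow_ups:
        # Each request carries one surgical instruction plus the latest draft.
        prompt = f"{instruction}\n\nCurrent draft:\n{history[-1]}"
        history.append(send(prompt))
    return history


def fake_send(prompt):
    """Stand-in for a model call: echoes the instruction it received."""
    return "[revised per: " + prompt.splitlines()[0] + "]"


versions = refine(
    "First draft text.",
    ["Make the introduction punchier.", "Expand section three with one example."],
    fake_send,
)
print(len(versions))
```

Keeping every version in `history` means you can go back a step when a follow-up makes the draft worse.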

How to Know Your Draft Is Ready to Edit

Before you call it good and move on, run it through this quick four-point check:

- Structure matches the logical architecture of your original research bullets.

- Tone reads like your other published work (read it out loud; does it sound like you?).

- Every key research point appears exactly once in the right place.

- Placeholders exist for any stats, images, or links you still need to verify or add.

If it passes, you now have a workable draft. The heavy lifting is done. You can focus on the fun part: polishing, adding your unique insights, and making it publish-ready.
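Structure and tone still need a human read, but the last two checks can be partly automated. The sketch below assumes you can name a short key phrase for each research point and that placeholders are written in square brackets; `check_draft` is a hypothetical helper, and phrase counting is only a rough proxy for "appears exactly once."

```python
import re


def check_draft(draft, key_phrases):
    """Report missing or repeated key phrases and list bracketed placeholders."""
    lower = draft.lower()
    counts = {phrase: lower.count(phrase.lower()) for phrase in key_phrases}
    return {
        "missing": [p for p, n in counts.items() if n == 0],
        "repeated": [p for p, n in counts.items() if n > 1],
        # Matches [like this]; note that markdown link text would match too.
        "placeholders": re.findall(r"\[[^\[\]]+\]", draft),
    }


report = check_draft(
    "Drafting was 40% faster [add source]. Our readers tune out jargon.",
    ["40% faster", "tune out jargon", "2x engagement"],
)
print(report)
```

A non-empty `missing` or `repeated` list is a prompt for one more follow-up round, not a verdict on the draft.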

Your Research Is Now a Real Asset

A great prompt is not about tricking the AI. It is about giving the model the same clear brief you would give any skilled writer. When you distill research, define role and audience, lock in voice, and specify structure, the first draft stops being a surprise and starts being a solid foundation.

Your next step is simple. Grab the template from earlier, clean up one set of research notes you already have, and run it through the full process. You should notice the difference on the first try. You can also browse Yarnit’s prompt library, which collects a large set of prompts for content generation.

Once that draft is ready, the real magic happens in the editing phase. Head over to the next post in this series where we turn that workable draft into something truly publish-worthy.

You already did the hard work of the research. Now your prompts can finally do justice to it.