UGC ads have always been a bit of a paradox. They perform insanely well, often driving 2–4x higher CTRs and stronger conversions than polished brand videos, but they’re a nightmare to produce at scale.

Every campaign turns into an operational loop: finding the right creators, coordinating shoots, managing revisions, reshooting when something misses the mark. The output works, but the process doesn’t. It’s slow, expensive, and hard to repeat consistently.

That’s the gap AI has quietly closed.

Today, you can create the same talking-head, UGC-style ads without the usual production overhead: no creator coordination, no shoot logistics, no editing bottlenecks. What used to take days now takes minutes, and the cost has dropped to the point where scaling variations is no longer a constraint.

And if you’ve been paying attention, you’ve already seen the shift: it’s playing out in your competitors’ ad libraries. So this guide isn't about which tool to use. It's about the craft that sits underneath the tool: how to choose the right avatar, write like a human, and build a workflow that produces results, not just videos.

Picking Your Avatar

Before you write a single line of script, you need to get the casting right. And in the world of AI talking heads, "casting" means understanding what makes a digital presenter believable, not just technically impressive.

1. The Two-Second Rule

Viewers form a credibility judgment within two seconds of a video starting. Everything rides on that window. What they're unconsciously scanning for isn't production quality; it's micro-signals of humanity: the slight shift of an eye line, a natural blink rate, the tiniest asymmetry in facial expression.

According to Zeely AI's 2026 roundup, the avatars that pass this test are those built with high-fidelity engines that blink and shift eye gaze naturally, not avatars that hold a fixed, forward stare. Start your avatar evaluation by watching the first two seconds of a sample video with fresh eyes. If it passes the gut check, keep going. If something feels off in frame one, your audience will feel it too.

2. What to Actually Evaluate

When assessing any avatar for a campaign, put it through these specific tests before committing:

• Lip-sync under stress: Feed it a script with product names, acronyms, dollar amounts, and dates. Some tools stumble here and instantly lose credibility.

• Dynamic range: Ask it to deliver a quiet, intimate line and then an energetic one. Avatars that handle tonal variation tend to handle everything else well too.

• Demographic alignment: The avatar should reflect your audience's world, not just look generically "presenter-like." Diverse representation and contextual fit both affect whether viewers feel the content is for them.

• The silent test: Watch the video with the sound off. Can you follow the emotion and intent just from expression and body language? If it's blank or robotic without audio, it won't hold up with it either.

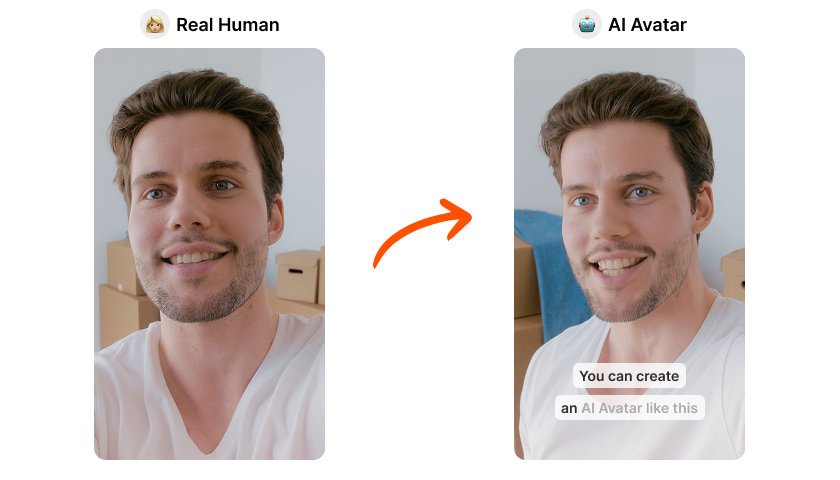

Custom Avatar vs. Stock Avatar: When Each Makes Sense

Stock avatars are fine for volume testing and for brands without an established spokesperson persona. But if you're building a content series or want a consistent face across campaigns, a custom avatar (a digital version of a real person or a branded character) creates continuity that stock can't replicate. That makes custom avatars particularly valuable for brands that need a recognisable face across many videos without filming each one.

Once your avatar passes these tests, the real work begins.

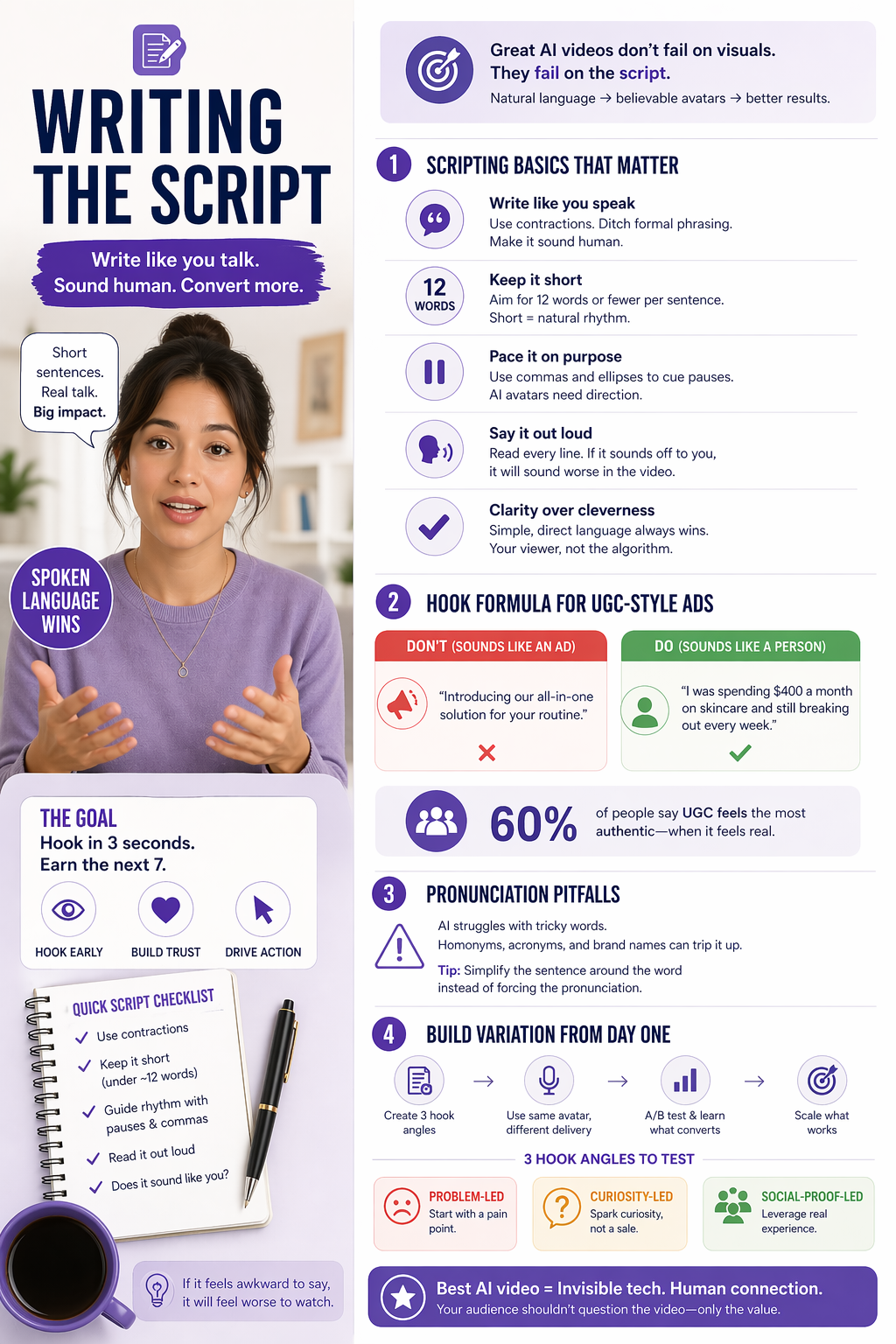

Writing the Script

Most AI talking head videos fail at the script level. The avatar is convincing. The lip sync is clean. But the language is formal, stiff, and structured like a press release because that's often what gets fed into the generator. The fix is deceptively simple: write like you talk.

The Core Principle: Spoken Language, Not Written Language

There's a meaningful difference between text that's meant to be read and text that's meant to be spoken. AI presenters are amplifiers: if your script feels robotic, your video will too. If it feels natural and conversational, the avatar will sound human. So keep sentences short and speak directly to the viewer.

Here are the structural rules that make the biggest difference:

• Always use contractions. "We're" not "we are." "You'll" not "you will." Formal constructions immediately signal that a human didn't write this.

• Keep sentences under 12 words where possible. Long sentences often feel robotic when delivered by AI. Short sentences create a natural rhythm.

• Use commas and ellipses to direct pacing. AI avatars need explicit cues for rhythm and pause; they don't ad-lib breaths the way humans do.

• Read every line out loud before finalising. If it feels clunky coming out of your mouth, it will sound worse coming out of a synthetic one.
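If you write scripts in bulk, a small pre-flight check can catch the two most common offenders before anything reaches the generator. The sketch below is a hypothetical Python helper, not a feature of any avatar platform: it splits a script into sentences with a naive regex, flags any sentence that isn't under 12 words, and flags a short, illustrative list of uncontracted phrases. It doesn't replace reading the script out loud.

```python
import re
from typing import List

# Illustrative (hypothetical) table of formal phrases and their contracted forms.
# Deliberately short; extend it to match your brand voice.
CONTRACTIONS = {
    "we are": "we're",
    "you will": "you'll",
    "you are": "you're",
    "it is": "it's",
    "do not": "don't",
    "i am": "I'm",
}

MAX_WORDS = 12  # "keep sentences under 12 words where possible"


def split_sentences(script: str) -> List[str]:
    """Naively split after ., ! or ? followed by whitespace (fine for short ad copy)."""
    return [s for s in re.split(r"(?<=[.!?])\s+", script.strip()) if s]


def lint_script(script: str) -> List[str]:
    """Return warnings for sentences that run long or use uncontracted phrasing."""
    warnings = []
    for n, sentence in enumerate(split_sentences(script), start=1):
        word_count = len(sentence.split())
        if word_count >= MAX_WORDS:
            warnings.append(f"sentence {n}: {word_count} words (aim for fewer than {MAX_WORDS})")
        lowered = sentence.lower()
        for formal, contracted in CONTRACTIONS.items():
            # Word boundaries stop "it is" from matching inside longer words.
            if re.search(rf"\b{formal}\b", lowered):
                warnings.append(f'sentence {n}: use "{contracted}" instead of "{formal}"')
    return warnings
```

For example, `lint_script("We are launching a new serum today. It is light.")` returns two warnings, one for each uncontracted phrase. The word limit is a guideline, so treat a long-sentence warning as a prompt to reread the line, not a hard rule.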

The Hook Formula for UGC-Style Ads

The first three seconds of a UGC ad have one job: earn the next seven. And the content that earns that time is almost always a relatable problem, not a product claim.

Compare these two openers:

"Introducing [Product] — the all-in-one solution for your skin care routine." ← This sounds like an ad.

"I was spending $400 a month on skincare and still breaking out every week." ← This sounds like a person.

The second opener works because it triggers identification before it triggers defence. That's UGC's whole advantage: 60% of consumers consider it the most authentic form of marketing content, but the advantage only holds when the ad actually feels like it came from someone real.

Phonetic Traps and How to Avoid Them

AI voices handle standard language well. They handle edge cases poorly. Watch for homographs (the present-tense "read" vs. the past-tense "read"), technical acronyms, and foreign product names. A useful trick from Brightspace's avatar scripting lessons: if a word is being mispronounced, simplifying the surrounding sentence often works better than trying to fix the word itself phonetically. Restructure around the problem rather than fighting it.
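Phonetic traps are easier to handle if you spot them before generation. As a rough sketch (the regex heuristics are assumptions for illustration, not any vendor's rules), this hypothetical Python helper lists every dollar amount, number, and all-caps acronym in a script along with the sentence it sits in. That tells you which sentences might need restructuring, and which moments to scrub during the lip-sync check.

```python
import re
from typing import List, Tuple

# Heuristic patterns (assumptions, not any vendor's rules). Matches are scanned
# left to right and never overlap, so "$39.99" is consumed as a dollar amount
# before the bare-number pattern can see "39.99".
RISK_RE = re.compile(
    r"(?P<dollar>\$\d+(?:,\d{3})*(?:\.\d+)?)"
    r"|(?P<number>\b\d+(?:,\d{3})*(?:\.\d+)?)"
    r"|(?P<acronym>\b[A-Z]{2,}\b)"
)


def split_sentences(script: str) -> List[str]:
    """Naively split after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", script.strip()) if s]


def flag_risky_tokens(script: str) -> List[Tuple[int, str, str]]:
    """Return (sentence number, kind, token) for every risky token, in order."""
    flags = []
    for n, sentence in enumerate(split_sentences(script), start=1):
        for match in RISK_RE.finditer(sentence):
            # lastgroup is the name of the alternative that matched.
            flags.append((n, match.lastgroup, match.group()))
    return flags
```

For "Our SPF 50 serum costs $39.99. It ships in 2 days." it flags SPF, 50, and $39.99 in the first sentence and 2 in the second. It can't recognise product names or foreign words, so those still need a human read-through.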

Build Variation Into the Script from the Start

One of the biggest structural advantages of AI UGC is that you can test multiple script angles without a reshoot. A single avatar can deliver different hooks, different CTAs, and different emotional tones, all from the same session. Cometly's generator breakdown highlights how this plays out: mix voices and tones into persona variations, run A/B tests, and keep refining the script until you find the messaging that converts, with none of the coordination overhead.

Write your scripts in sets of three: one problem-led hook, one curiosity-led hook, one social-proof-led hook. Let the data tell you which one your audience responds to.
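When the data comes in, resist crowning whichever hook has the highest click-through rate on day one. One common approach, sketched here in Python under the assumption that you can export impressions and clicks per hook, is a two-proportion z-test between the leader and the runner-up. `HookResult` and `pick_winner` are hypothetical names for illustration. It's a simplification (it ignores multiple-comparison effects and anything beyond clicks), so treat it as a sanity check, not a replacement for your ad platform's own experiment tooling.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class HookResult:
    """Exported stats for one hook variant (hypothetical structure)."""
    name: str          # e.g. "problem", "curiosity", "social-proof"
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions if self.impressions else 0.0


def z_score(a: HookResult, b: HookResult) -> float:
    """Pooled two-proportion z statistic for a.ctr - b.ctr."""
    if a.impressions == 0 or b.impressions == 0:
        return 0.0
    pooled = (a.clicks + b.clicks) / (a.impressions + b.impressions)
    se = math.sqrt(pooled * (1 - pooled) * (1 / a.impressions + 1 / b.impressions))
    return (a.ctr - b.ctr) / se if se else 0.0


def pick_winner(results: List[HookResult], z_threshold: float = 1.96) -> Optional[str]:
    """Name of the top-CTR hook if it clearly beats the runner-up, else None.

    1.96 corresponds to roughly 95% confidence for a two-sided test.
    """
    if len(results) < 2:
        raise ValueError("need at least two hook variants to compare")
    ranked = sorted(results, key=lambda r: r.ctr, reverse=True)
    best, runner_up = ranked[0], ranked[1]
    return best.name if z_score(best, runner_up) >= z_threshold else None
```

With 10,000 impressions per hook and CTRs of 3.0%, 2.0%, and 2.2%, the problem-led hook wins (z ≈ 3.6). A 3.0% vs. 2.4% gap on only 500 impressions per hook returns `None` (z ≈ 0.6), which means: keep the test running.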

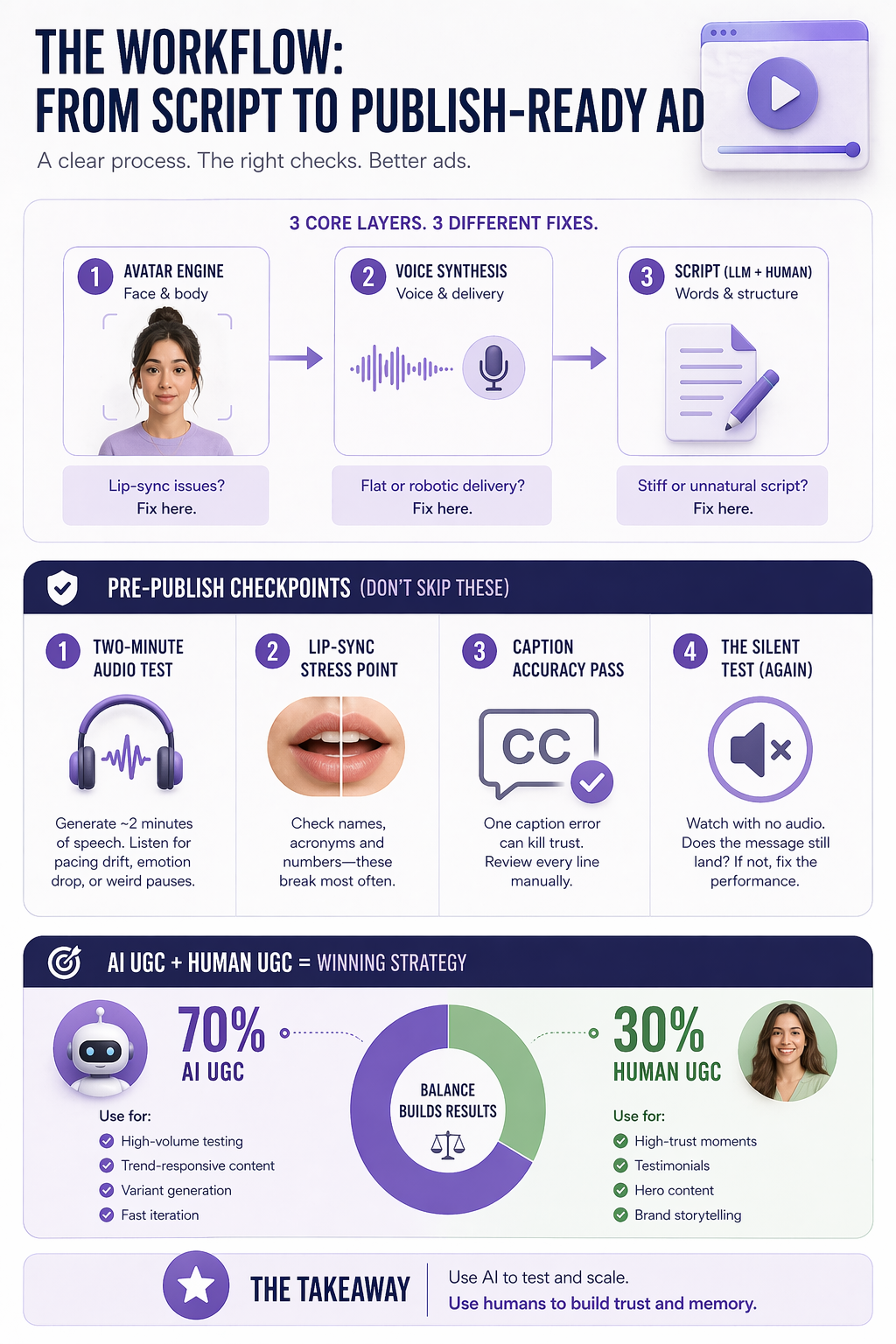

The Workflow: From Script to Publish-Ready Ad

In 2026, the most capable AI avatar platforms have converged around three integrated functions: a generative avatar engine (the face and body), a neural voice synthesis layer (the voice and delivery), and an LLM-assisted scriptwriting interface. Understanding which layer you're working with at any given moment helps you troubleshoot efficiently. A lip-sync problem is an avatar engine issue. A flat, toneless delivery is a voice synthesis issue. A stiff, unnatural script is an LLM or human writing issue. They have different fixes.

Before any video leaves your workflow, run it through these checkpoints:

• The two-minute audio test: Fish Audio's evaluation guide recommends generating at least two minutes of continuous speech and listening for pacing drift, emotional flattening, or unnatural pauses. These are common failure modes that only show up in longer content.

• Lip-sync stress point: Scrub to any moment where the avatar says a product name, acronym, or number. These are the moments most likely to break.

• Caption accuracy pass: Errors in on-screen text destroy trust faster than any visual glitch. Check every caption line manually.

• The silent test (again): Watch the final cut with no audio. Do the expression and pacing still communicate intent? If not, something in the performance layer needs adjustment.

The most effective strategy in 2026 isn't choosing between AI UGC and human UGC; it's knowing which to use for which job. A practical starting point is a 70/30 split. Use AI UGC for about 70% of volume (high-frequency testing, trend-responsive content, and variant generation) and traditional human UGC for the remaining 30%, reserved for high-trust moments: hero content, testimonials, and brand storytelling that requires embodied social proof.

The $2 Ad Is Only Worth It If the Craft Is There

The cost barrier to video advertising is effectively gone. What remains, and what will separate brands that win from brands that produce noise, is judgment.

Judgment about which avatar passes the two-second believability test. Judgment about how to open a script so that a viewer feels seen rather than sold to. Judgment about when to run fifty AI variants and when to put a real person in front of a camera for the moments that require genuine social proof.

Build the workflow. Master the script. And remember: the best AI video is one your audience never thinks to question.

And this is exactly where things get interesting. We’re building toward AI video capabilities at Yarnit, designed not just to generate content, but to help you make the right calls on what should be created in the first place.